Meta's announcement of its No Language Left Behind model triggered investor withdrawals from African language startups, forcing several organizations working on 55 African languages to shut down, according to AI ethics researcher Timnit Gebru. Investors told these startups that Meta had "solved" translation, making their specialized work obsolete, Gebru reported.

OpenAI representatives have approached small language AI organizations with a stark message: collaborate for minimal payment or face obsolescence. "OpenAI is going to put you out of business soon because we're going to make our models better in your language," representatives told organizations, according to Gebru. The company offered to purchase data for what sources described as "peanuts."

This pattern extends beyond individual cases. When any Big Tech company announces models covering specific languages, investors pressure smaller organizations in those markets to close operations. The dynamic creates a barrier for purpose-built AI solutions designed for specific communities and languages.

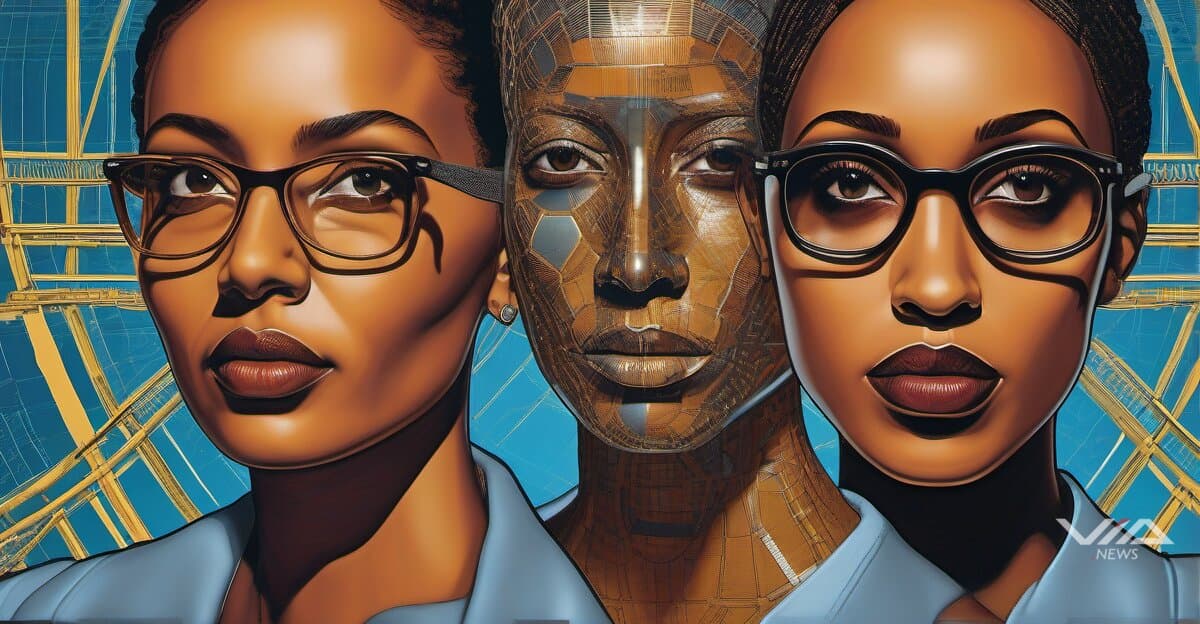

AI ethics researchers including Gebru and Abeba Birhane argue that resource-intensive giant models pose inherent safety risks. Models designed for undefined tasks lack clear safety parameters, they contend. The development process relies on massive data collection that critics characterize as data appropriation without consent.

Birhane identified "AI for good" rhetoric as a deflection strategy. Companies point to purported social benefits to counter grassroots resistance movements, she said. "AI for good allows companies to say 'Look, we're doing something good! Everything about AI is not bad. And you can't criticize us,'" Birhane stated.

The researchers challenge claims that giant models benefit marginalized communities. Gebru describes development under the dominant paradigm as "stealing data, killing the environment, exploiting labor." The environmental cost of training massive models adds to concerns about their sustainability.

Critics argue the tension between centralized giant models and specialized solutions represents a fundamental divide in AI development. Purpose-built models for specific languages and communities offer targeted benefits without the resource demands of general-purpose systems. But Big Tech's market dominance makes it difficult for these alternatives to secure funding and survive.

The displacement of small language AI organizations particularly affects Global South communities, where specialized models address linguistic and cultural needs that general-purpose systems may not adequately serve.