Olix will ship its first photonic chip product in 2027, targeting AI workloads that current GPU architectures struggle to handle efficiently. The startup joins MatX ($500M raised), Nio GeniTech (autonomous driving chips), and other specialized silicon ventures betting that domain-specific designs will outperform general-purpose accelerators.

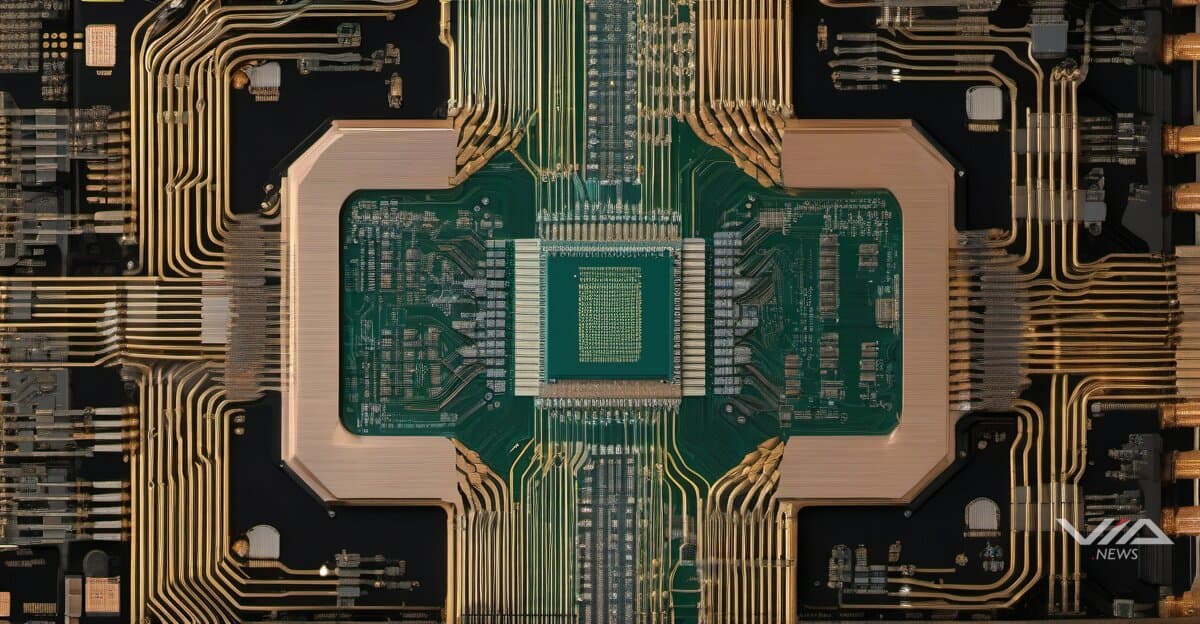

Photonic chips use light instead of electricity to move data, which could cut power consumption by 90% for certain matrix operations. Olix's 2027 timeline would put it in production as hyperscalers deploy the generation of AI infrastructure that follows Nvidia's H100 and H200 GPUs.
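Because the 90% figure applies only to certain matrix operations, the system-level saving depends on how much of a workload's energy those operations consume. The sketch below works through that arithmetic. The function name and the energy shares are illustrative assumptions, not figures from Olix.

```python
def overall_energy_saving(matrix_share: float, matrix_reduction: float = 0.90) -> float:
    """Fraction of total energy saved when only the matrix-operation share shrinks.

    matrix_share     -- hypothetical fraction of total energy spent on matrix operations
    matrix_reduction -- reduction applied to that share (0.90 is the figure cited above)
    """
    if not 0.0 <= matrix_share <= 1.0:
        raise ValueError("matrix_share must be between 0 and 1")
    if not 0.0 <= matrix_reduction <= 1.0:
        raise ValueError("matrix_reduction must be between 0 and 1")
    # Energy outside the matrix operations is assumed to be unchanged.
    return matrix_share * matrix_reduction


if __name__ == "__main__":
    # Placeholder shares chosen only to show the trend.
    for share in (0.5, 0.7, 0.9):
        saving = overall_energy_saving(share)
        print(f"matrix share {share:.0%}: overall saving {saving:.0%}")
```

Under these assumptions, a workload that spends half its energy on matrix operations would save 45% overall, not 90%.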

The specialized chip race follows $140B+ in AI funding rounds this year. OpenAI's $110B raise and Anthropic's $30B round signal investor confidence that current hardware bottlenecks require new approaches. MatX's $500M specifically targets transformer model inference, while Nio GeniTech focuses on automotive edge computing.

Design tooling is advancing to support this shift. Synopsys released OptoCompiler for photonic chip design, addressing a gap left by traditional EDA tools, which assume electronic circuits. The company's VC Functional Safety Manager targets automotive chip validation, reflecting demand for specialized verification flows.

The Canada Foundation for Innovation's $552M investment in research infrastructure demonstrates government backing for next-generation semiconductor R&D. The funding supports the university cleanrooms and testing facilities needed to prototype novel architectures outside established fabs.

Traditional players face pressure to adapt. GPU makers optimized for parallel compute now compete against ASICs tailored for specific neural network operations. Photonic approaches like Olix's could sidestep power density limits that constrain electronic chips at 3nm process nodes.

The 2027 target gives Olix 18 months to finalize designs and secure foundry capacity. Photonic chips require hybrid manufacturing that combines electronic control circuits with optical waveguides, which complicates production partnerships. Success depends on proving a cost-per-inference advantage over mature GPU ecosystems with established software stacks.
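One simple way to frame a cost-per-inference comparison is to amortize hardware cost over its service life, add energy cost, and divide by throughput. The sketch below does that. Every number in it is a placeholder, and the `Accelerator` fields and function name are hypothetical, not vendor data or a published methodology.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    hardware_cost_usd: float    # purchase price, amortized over lifetime_hours
    lifetime_hours: float       # assumed service life
    power_kw: float             # average draw under load
    inferences_per_hour: float  # sustained throughput


def cost_per_inference(acc: Accelerator, electricity_usd_per_kwh: float) -> float:
    """Amortized hardware cost plus energy cost, per inference."""
    hourly_hardware = acc.hardware_cost_usd / acc.lifetime_hours
    hourly_energy = acc.power_kw * electricity_usd_per_kwh
    return (hourly_hardware + hourly_energy) / acc.inferences_per_hour


if __name__ == "__main__":
    # Placeholder values for illustration only; not measurements of any real chip.
    gpu_baseline = Accelerator("gpu_baseline", 30_000.0, 35_000.0, 0.7, 1_000_000.0)
    photonic_candidate = Accelerator("photonic_candidate", 45_000.0, 35_000.0, 0.2, 1_000_000.0)
    for acc in (gpu_baseline, photonic_candidate):
        print(f"{acc.name}: ${cost_per_inference(acc, 0.10):.8f} per inference")
```

The model leaves out software porting, cooling, and utilization. Those costs are part of what an established GPU software stack already absorbs, so a real comparison would need to include them.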

Industry observers note that specialized chips need years to build developer adoption. Even superior hardware requires framework integration, kernel optimization, and customer validation before displacing incumbent solutions at scale.