The AI industry is entering a new phase of maturity — one defined not by breathless possibility alone, but by the friction of real-world deployment, governance conflict, and resource scarcity. Across autonomous systems, robotics, and foundation model development, capability gains are accelerating even as safety governance struggles to keep pace.

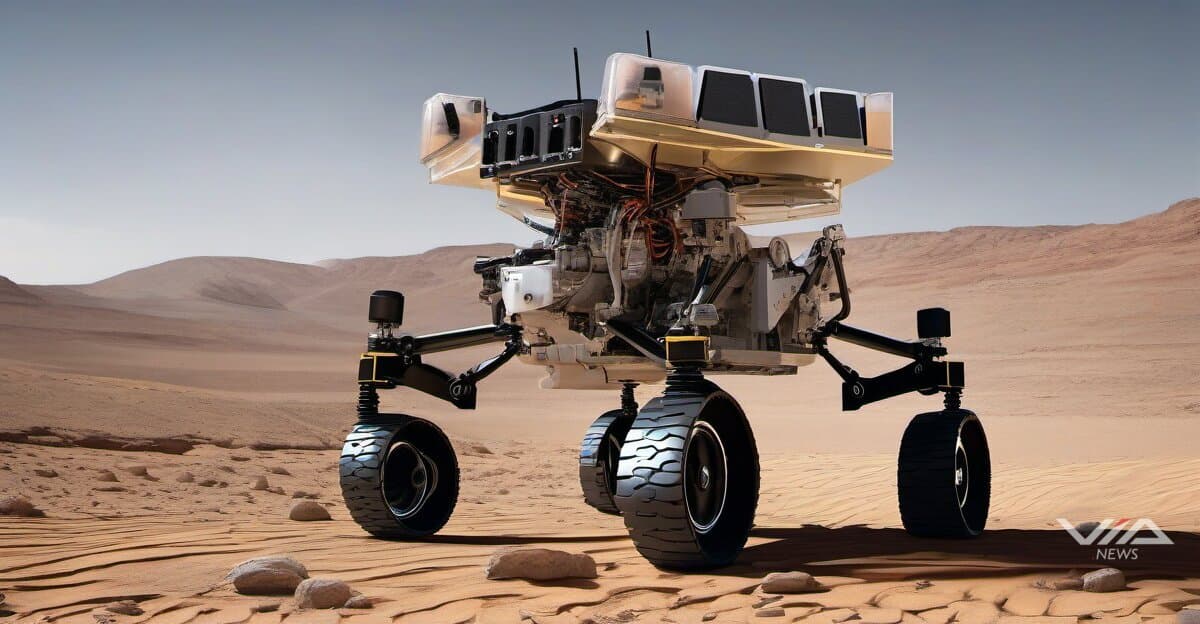

On the frontier of autonomous navigation, AI-driven systems are now operating in environments where real-time human oversight is structurally impossible. Mars rover navigation is one of the clearest examples: onboard AI must make real-time path decisions without Earth-based intervention, because a one-way radio signal can take more than 20 minutes to cross the distance between the two planets, and a command-and-response round trip takes twice that. These systems embody the promise of autonomous AI, and the stakes of getting alignment wrong in isolated, high-consequence settings.
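To make the delay constraint concrete, the short sketch below computes light-travel time between Earth and Mars. The two distances are assumed round figures for the approximate closest and farthest separations, not mission data, and the function names are illustrative.

```python
# Speed of light in km/s (exact by definition of the metre).
SPEED_OF_LIGHT_KM_S = 299_792.458

# Assumed approximate Earth-Mars separations in km (illustrative round figures).
CLOSEST_KM = 54.6e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km: float) -> float:
    """One-way light-travel time in minutes over the given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


def round_trip_delay_minutes(distance_km: float) -> float:
    """Time for a signal to go out and a reply to come back, in minutes."""
    return 2.0 * one_way_delay_minutes(distance_km)


if __name__ == "__main__":
    for label, km in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
        print(
            f"{label}: one-way {one_way_delay_minutes(km):.1f} min, "
            f"round trip {round_trip_delay_minutes(km):.1f} min"
        )
```

Under these assumed distances the one-way delay ranges from about 3 minutes to about 22 minutes, so a rover that waited for a human decision at the far end of that range would sit idle for well over half an hour per exchange.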

Back on Earth, humanoid robotics deployments are moving from lab demonstrations to operational contexts. The convergence of more capable vision-language models with physical hardware is shortening the gap between prototype and production. Yet deployment at scale raises questions that benchmarks cannot answer: how do these systems behave in edge cases, and who bears liability when they fail?

Efficiency is emerging as the decisive competitive variable. Nvidia's Nemotron 3 Nano has been rated by Artificial Analysis as the most open and efficient model among peers of equivalent size, with leading accuracy — a signal that the performance-per-parameter race is intensifying. Similarly, Moonshot AI's Kimi K2 and the DAPO reinforcement learning framework reflect a broader industry push to extract more capability from constrained compute budgets. These are not incremental improvements; they represent a structural shift in how competitive advantage is built.

That shift is happening under resource pressure that even the largest players cannot ignore. OpenAI co-founder Greg Brockman has publicly acknowledged the core trade-off his company faces: every unit of compute used to serve hundreds of millions of ChatGPT users is compute not used to train the next generation of models. With the company raising $100 billion at a reported $800 billion valuation — while remaining unprofitable — the economics of scale are forcing strategic choices that will shape the trajectory of the field.

Talent consolidation is compounding these dynamics. The arrival of figures like Peter Steinberger at OpenAI signals continued concentration of engineering expertise at a handful of labs, narrowing the competitive field even as open-weight models attempt to redistribute capability more broadly.

Against this backdrop, governance tensions are hardening. Allegations that Google suppressed AI-generated medical safety warnings raise serious questions about the accountability of systems embedded in high-stakes domains. Litigation over AI voice cloning — where the synthetic reproduction of a person's voice without consent is becoming technically trivial — is testing the limits of existing intellectual property and privacy law. Meanwhile, reported friction between Anthropic and the Pentagon over the terms of military AI deployment illustrates the difficulty of reconciling safety-first research culture with the imperatives of defense procurement.

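LoRA-based alignment methods offer a technical path toward more adaptable safety guardrails. Instead of retraining every weight in a large model, LoRA freezes the base weights and trains small low-rank adapter matrices alongside them, which cuts the number of trainable parameters, and with it the cost of each fine-tuning run, to a fraction of full retraining. But efficiency in alignment tooling does not resolve the institutional and political conflicts over who sets the guardrails and who enforces them.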
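The cost argument is easier to see with numbers. The sketch below is a minimal pure-Python illustration of the low-rank update at the heart of LoRA, where a frozen weight matrix W is augmented as W + (alpha / r) * B * A. The class name, layer sizes, rank, and scaling value are all assumptions chosen for illustration, not settings from any particular model or library.

```python
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def full_trainable_params(d_out: int, d_in: int) -> int:
    """Parameters updated when fine-tuning the whole weight matrix."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters updated when training only the adapters A and B."""
    return rank * (d_in + d_out)


class LoRALinear:
    """Hypothetical linear layer with a frozen weight and a low-rank adapter."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight          # frozen base weights, shape d_out x d_in
        self.scale = alpha / rank     # standard LoRA scaling factor
        # A starts as small random values, B starts at zero, so the adapter
        # contributes nothing until training updates it.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def effective_weight(self):
        """Return W + scale * (B @ A) without modifying the frozen W."""
        delta = matmul(self.B, self.A)
        return [
            [w + self.scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(self.weight, delta)
        ]

    def forward(self, x):
        """Apply the adapted layer to an input vector of length d_in."""
        column = [[v] for v in x]
        return [row[0] for row in matmul(self.effective_weight(), column)]


if __name__ == "__main__":
    full = full_trainable_params(4096, 4096)
    lora = lora_trainable_params(4096, 4096, rank=8)
    print(f"full: {full}, LoRA: {lora}, ratio: {lora / full:.4%}")
```

For an assumed 4096 by 4096 layer at rank 8, the adapter trains 65,536 parameters against 16,777,216 for the full matrix, which is 1/256 of the total. Because B is initialized to zero, a freshly created adapter leaves the base model's behavior unchanged, which is what makes adapters cheap to swap in and out as guardrail requirements change.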

What is becoming clear is that AI's competitive maturation is inseparable from its governance maturation. The labs that navigate both simultaneously — advancing capability while building credible accountability structures — are best positioned for the next phase. Those that treat safety as a constraint to be optimized around may find the cost of that choice compounding in ways that compute budgets cannot absorb.